deciBelé

Joshua Tree, California

33.8734° N, 115.9010° W

AI Vision: 002

Programs: Rhino, Grasshopper, Revit, Google API, NanoBanana Pro

Typology: Cultural

Conceptualization

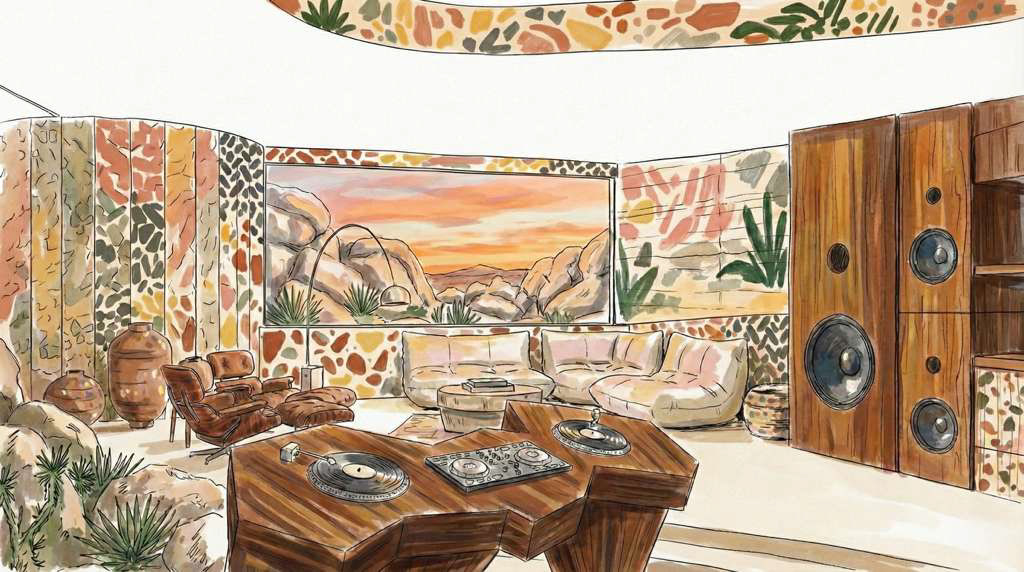

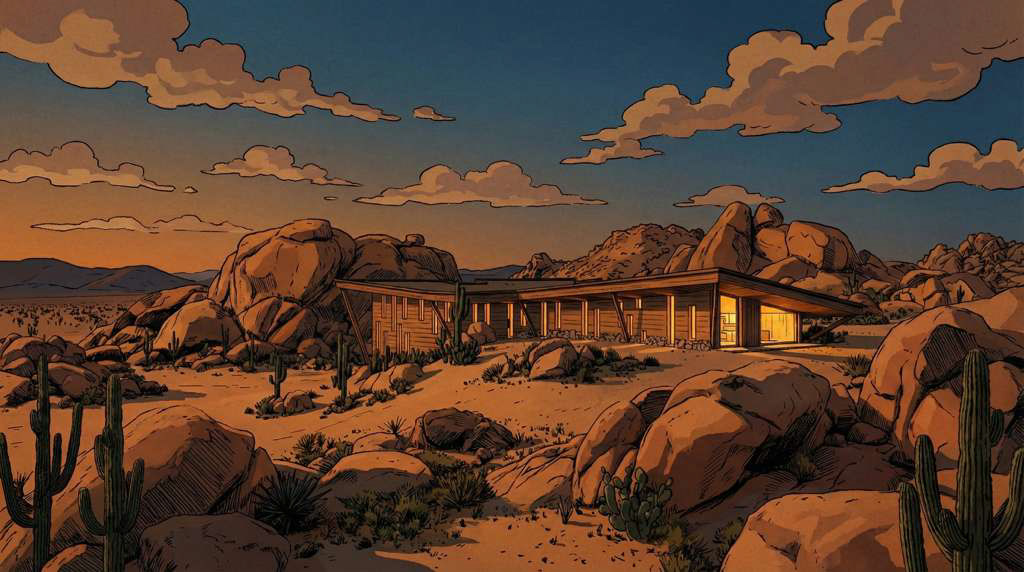

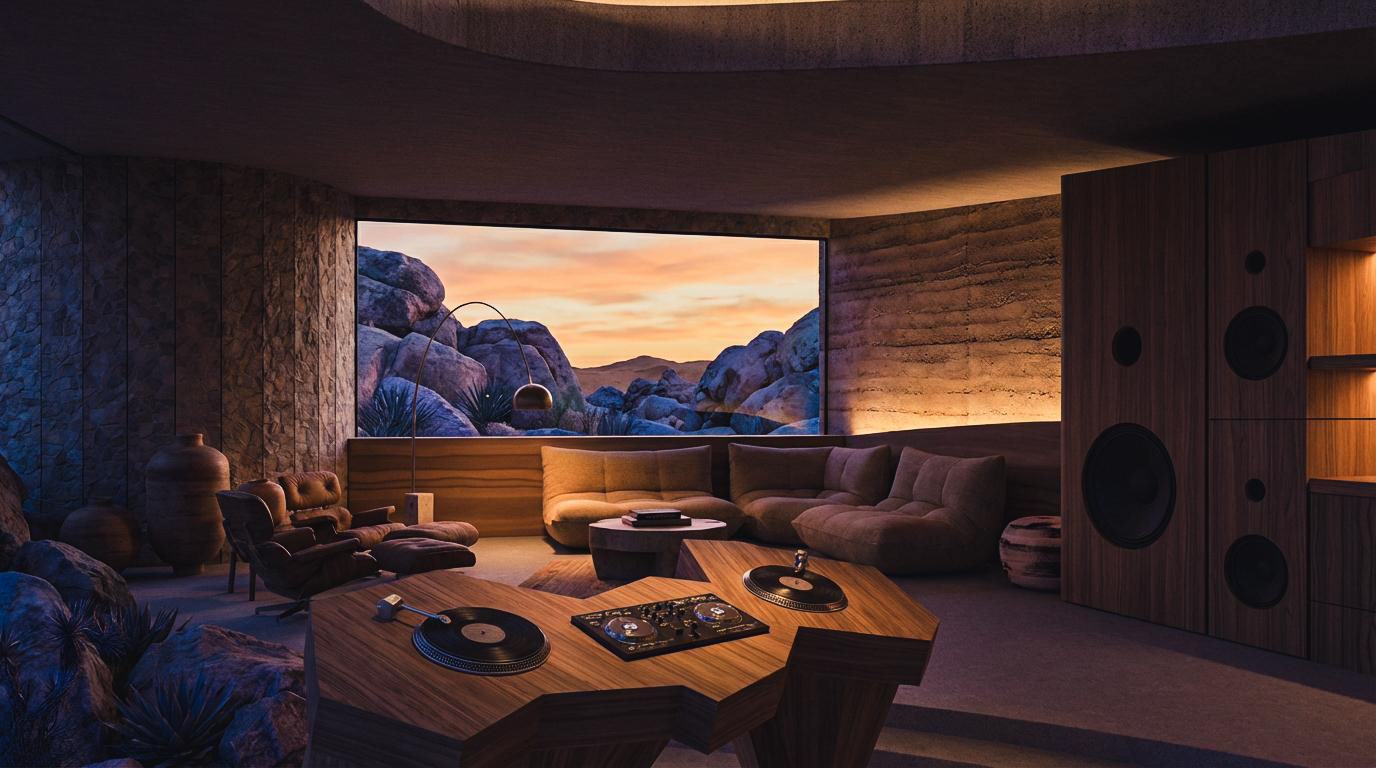

Embedded among boulders and desert flora, the structure opens itself selectively to the landscape while remaining acoustically grounded. A hovering roof plane and thick earthen walls frame a carved central void, where warm interior light contrasts with the cool desert dusk, emphasizing enclosure, calm, and intentionality.

The project was developed through direct AI-integrated design and rendering iteration, where live geometry informed real-time visual feedback rather than post-processed imagery. This workflow allowed spatial, material, and atmospheric decisions to evolve simultaneously—positioning iteration itself as a design driver rather than a final validation step.

deciBelé is conceived as a high-desert recording studio and listening retreat, where architecture functions as an instrument tuned by mass, silence, and light. Embedded into the terrain, the project prioritizes slowness, isolation, and sensory focus, creating a controlled environment for deep listening, recording, and creative recalibration within the vast quiet of Joshua Tree.

Integrated GenAI Process

For deciBelé, AI-generated imagery is not produced through detached or sequential prompting, but through a live, bidirectional link between generative image models and active Rhino geometry. Visual output is driven directly by the viewport itself—capturing massing, proportion, and spatial intent in real time—so prompts operate as contextual modifiers rather than speculative instructions. This integration ensures that every image remains architecturally grounded, evolving in lockstep with the geometry it represents.

Rather than generating isolated visions, the system enables continuous iteration where form, material, and atmosphere co-develop simultaneously. References, tonal cues, and narrative intent guide the AI’s interpretation of the live model, allowing it to extrapolate experiential qualities without deviating from spatial logic. In this workflow, AI shifts from post-processing tool to design collaborator, transforming Rhino into an adaptive visualization environment where architecture and image evolve as a single, unified process.

Live Geometry, Live Intelligence

For deciBelé, generative AI was integrated natively into Rhino through Grasshopper-driven calls to Gemini (NanoBanana Pro), creating a live feedback loop between active geometry and image generation. Viewport captures were triggered directly from Grasshopper, paired with structured prompts, and sent to the model in real time—allowing AI to interpret massing, proportion, and spatial composition directly from the Rhino scene rather than from detached reference imagery.
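The pairing of a viewport capture with a structured prompt can be sketched roughly as follows. This is a minimal illustration, not the project's actual Grasshopper component: the helper `build_request`, the payload keys, and the prompt field names are all hypothetical, and the real Gemini request format is not described in the source.

```python
import base64
import json
from typing import Dict


def build_request(png_bytes: bytes, prompt_fields: Dict[str, str]) -> str:
    """Pair a viewport capture with structured prompt fields in one JSON body.

    Hypothetical payload layout; a real model API will define its own schema.
    """
    # Flatten the structured fields into one prompt string, in a stable order.
    prompt = "; ".join(
        f"{key}: {value}" for key, value in sorted(prompt_fields.items())
    )
    payload = {
        "prompt": prompt,
        # Image bytes are base64-encoded so they can travel inside JSON.
        "image_png_base64": base64.b64encode(png_bytes).decode("ascii"),
    }
    return json.dumps(payload)


# Placeholder bytes stand in for a real viewport capture.
fake_capture = b"\x89PNG placeholder"
body = build_request(
    fake_capture,
    {"materials": "thick earthen walls", "atmosphere": "desert dusk"},
)
```

Keeping the prompt as named fields rather than free text makes it easier to vary one quality, such as atmosphere, while the geometry capture stays fixed.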

Average generation times remained under 10 seconds, making rapid iteration viable at the pace of a design charrette. While true live viewport-to-AI updates are feasible within this framework, they were intentionally not implemented in this iteration of the system, to minimize operational cost and token processing overhead. Instead, updates were triggered discretely, preserving designer control over when visual feedback occurred while maintaining a fluid, near-real-time workflow.
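One way to picture discrete triggering is a rising-edge gate: a request fires once when the designer presses a button, not on every recompute of the definition. The sketch below assumes a boolean button input and uses a hypothetical `EdgeTrigger` class; the source does not say how the project's trigger was actually built.

```python
class EdgeTrigger:
    """Fire once per button press, ignoring repeated 'pressed' states."""

    def __init__(self) -> None:
        self._previous = False

    def should_fire(self, pressed: bool) -> bool:
        # Fire only on the transition from not-pressed to pressed.
        fire = pressed and not self._previous
        self._previous = pressed
        return fire


gate = EdgeTrigger()
# Simulated button states across five successive recomputes.
results = [gate.should_fire(state) for state in [False, True, True, False, True]]
```

Because a held button fires only once, each press maps to exactly one model call, which keeps cost predictable.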

This approach reframed AI from a post-processing step into an embedded design instrument. Variations in materiality, light behavior, enclosure, and atmosphere could be evaluated side-by-side against a consistent geometric base, allowing architectural intent to converge quickly. By grounding generative speculation in live geometry and controlled iteration, the charrette process prioritized clarity, continuity, and spatial rigor over spectacle.

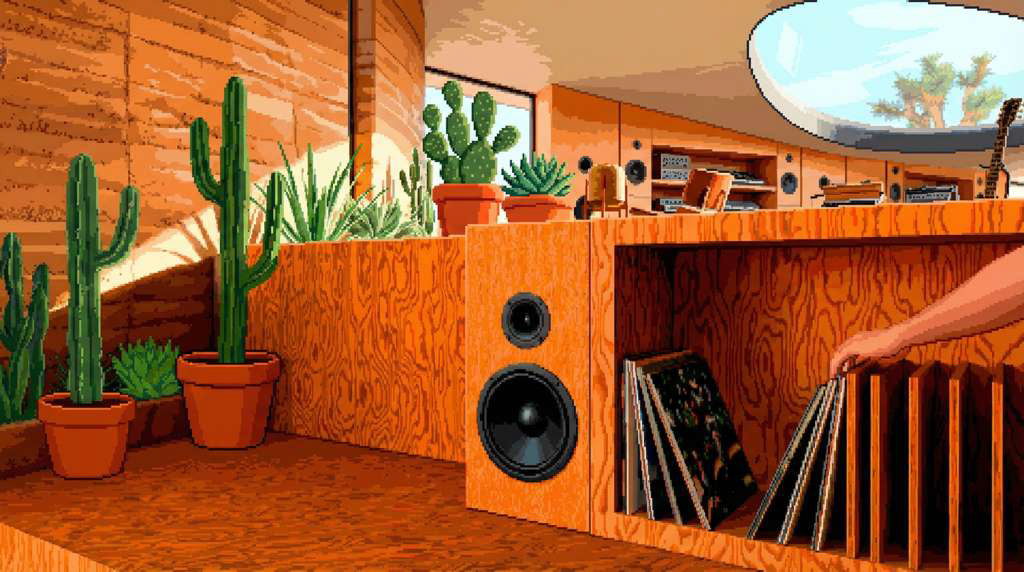

Prompt-Based Material Intelligence

This workflow replaces traditional material assignment with live, prompt-driven control tied to color-coded geometry groups inside Rhino. Volumes are organized and tagged visually through layer-based color control, then interpreted directly by the AI through language—allowing material, finish, and surface quality to be defined in seconds without opening a material editor or dealing with UV mapping. Geometry becomes a readable legend, and prompting becomes the fastest way to test design intent.

Applying materials in Rhino is optional, not mandatory. When used, it sharpens precision and reinforces intent, but the core shift is that materiality becomes conversational and reversible, not locked in early. Designers can cycle through palettes, textures, and finishes at the speed of thought—collapsing modeling, visualization, and evaluation into a single, fluid loop.
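As a rough illustration of how a color legend could become language the model reads, the sketch below turns a color-to-material mapping into a prompt fragment. The function name `build_material_prompt`, the wording of the prompt, and the example legend values are assumptions for illustration only.

```python
from typing import Dict


def build_material_prompt(legend: Dict[str, str]) -> str:
    """Turn a color-to-material legend into a prompt fragment."""
    # One line per color group, in the order the legend was defined.
    lines = [f"- {color} geometry: {material}" for color, material in legend.items()]
    return "Read each viewport color as a material:\n" + "\n".join(lines)


# Illustrative legend: layer display colors mapped to intended materials.
legend = {"red": "earthen wall", "blue": "glass"}
material_prompt = build_material_prompt(legend)
```

Swapping a palette then means editing one dictionary entry and regenerating, with no change to the Rhino model itself.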

Iteration Integration

This diagram visualizes a closed-loop workflow where the live Rhino viewport becomes the generator, not just the input. Geometry is streamed directly into an AI rendering pipeline, augmented by structured prompt fields and reference imagery that steer material tone, lighting atmosphere, and contextual detail. Instead of exporting stills for post-processing, the viewport is captured as-is and interpreted in real time—turning modeling into an active dialogue between geometry and machine vision.

Reference images act as controlled influence layers, not replacements. Each iteration refines selectively—garage doors shift, material contrast sharpens, environmental realism increases—while the base composition, camera position, and architectural intent remain constant. The result is not imagination detached from design, but enhancement grounded in live geometry. Iteration becomes immediate, visual feedback becomes architectural, and AI operates as an integrated rendering mode rather than a detached, after-the-fact visualization step.

This system collapses modeling, rendering, and revision into a single feedback cycle—where each prompt is a design decision, each result is evaluative data, and iteration happens without breaking flow.

Multi-Platform Flexibility

This workflow is platform-agnostic by design. Whether operating inside Rhino’s open modeling environment or Revit’s parametric BIM structure, the system connects directly to the active viewport—translating live geometry into AI-driven visualization without detaching from the authoring space. In Rhino, color-coded geometry groups and layer logic drive material interpretation fluidly. In Revit, categorized elements, parameters, and view controls inform how geometry is parsed and rendered. The interface remains consistent; only the geometry source shifts.

The architecture of the workflow is modular rather than model-specific. The AI engine is treated as a swappable layer—currently structured to support plug-and-play integration with multiple generative and enhancement models. Instead of locking the pipeline to a single provider, the system routes viewport data through configurable nodes, allowing designers to test different AI backends based on speed, fidelity, cost, or stylistic output. As new models emerge, they can be inserted without restructuring the core workflow.
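The swappable-layer idea can be sketched as a small router that maps backend names to interchangeable functions sharing one signature. Everything here is hypothetical: `BackendRouter`, the `(capture, prompt) -> image bytes` signature, and the stand-in `passthrough_backend`, which makes no model call, are illustrative and not the project's actual node structure.

```python
from typing import Callable, Dict

# A backend takes (capture bytes, prompt) and returns rendered image bytes.
Backend = Callable[[bytes, str], bytes]


class BackendRouter:
    """Route viewport captures to whichever registered backend is selected."""

    def __init__(self) -> None:
        self._backends: Dict[str, Backend] = {}

    def register(self, name: str, backend: Backend) -> None:
        self._backends[name] = backend

    def render(self, name: str, capture: bytes, prompt: str) -> bytes:
        if name not in self._backends:
            raise KeyError(f"unknown backend: {name}")
        return self._backends[name](capture, prompt)


def passthrough_backend(capture: bytes, prompt: str) -> bytes:
    # Stand-in for a real model call: returns the capture unchanged.
    return capture


router = BackendRouter()
router.register("passthrough", passthrough_backend)
rendered = router.render("passthrough", b"viewport-bytes", "warm dusk light")
```

Adding a new model then amounts to registering one more function; the capture and prompt steps upstream do not change.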

The result is iteration that is not tied to software or vendor. Modeling platforms become geometry hosts; AI engines become interchangeable translators. Designers retain control while remaining adaptable—able to pivot between tools, scale across project types, and evolve alongside rapidly advancing AI ecosystems without rebuilding their process each time technology shifts.

Photo Gallery